Bioinformatic analyses are carried out either on individual workstations running Linux or on a dedicated high-performance computing (HPC) system.

HPC details

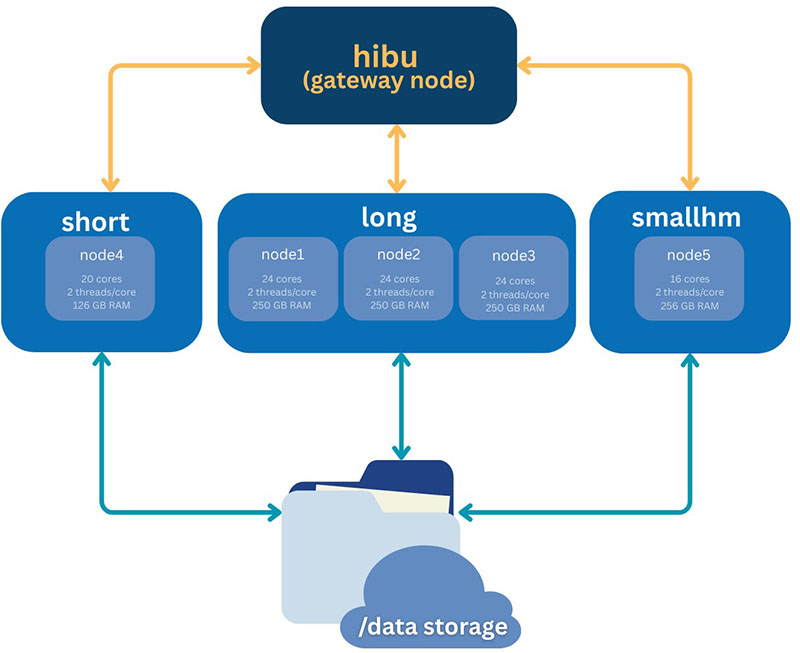

The IMBB HPC infrastructure currently comprises 120 CPU cores across 5 compute nodes, with 1.2 TB of RAM in aggregate (up to 256 GB of RAM on selected high-memory nodes). The system provides 42 TB + 88 TB of usable storage, configured as RAID 5 and served over NFS to provide both redundancy and high availability for user workloads. The cluster is designed to accommodate a broad range of computational biology, bioinformatics, and data analysis tasks.

Jobs are scheduled with Slurm. A dedicated head (login) node allows users to prepare scripts, submit and cancel jobs, and transfer data. Storage is provided by a NAS with a total capacity of up to 42 TB + 88 TB.
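As an illustration of the submission workflow, here is a minimal Python sketch that composes a Slurm batch script for a single-node job. The `#SBATCH` options are standard Slurm directives; the helper name `build_sbatch_script`, the job name, the requested resources, and the command are hypothetical placeholders, not values prescribed by the IMBB documentation.

```python
# Sketch: compose a Slurm batch script as text.
# All job-specific values (job name, resources, command) are hypothetical.

def build_sbatch_script(job_name, partition, cpus, mem_gb, time_limit, command):
    """Return the text of a Slurm batch script for a single-node job."""
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --partition={partition}",   # e.g. long, short, or smallhm
        "#SBATCH --nodes=1",
        f"#SBATCH --cpus-per-task={cpus}",
        f"#SBATCH --mem={mem_gb}G",           # memory per node
        f"#SBATCH --time={time_limit}",       # wall-clock limit, HH:MM:SS
        "#SBATCH --output=%x_%j.log",         # %x = job name, %j = job ID
        "",
        command,
    ]
    return "\n".join(lines) + "\n"


script = build_sbatch_script(
    job_name="align_sample1",
    partition="short",
    cpus=8,
    mem_gb=32,
    time_limit="02:00:00",
    command='echo "running on $(hostname)"',
)
print(script)
```

Saved to a file such as `job.sh` on the login node, a script like this would typically be submitted with `sbatch job.sh`, monitored with `squeue -u $USER`, and cancelled with `scancel <jobid>`. Time limits and other partition policies are not stated here, so check the documentation page before choosing values.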

Please see the Documentation page: https://www.imbb.forth.gr/en/facilities/Bioinformatics-Unit.15/&tabid=HPC-Documentation.152

Partitions

| Partition | Number of nodes | CPU cores per node | Threads per core | Available RAM per node | Node hostnames |
| --- | --- | --- | --- | --- | --- |
| long | 3 | 24 | 2 | 250 GB | node1, node2, node3 |
| short | 1 | 20 | 2 | 126 GB | node4 |
| smallhm | 1 | 16 | 2 | 256 GB | node5 |
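To make the partition limits easier to apply when sizing a job, the sketch below encodes the per-node figures listed above and reports which partitions could host a single-node request. The names `PARTITIONS` and `partitions_fitting` are hypothetical, and the check assumes that requests are counted in CPU cores (not hardware threads) and that the full listed RAM can be requested; actual scheduler limits may differ.

```python
# Per-node resources for each partition, copied from the table above.
PARTITIONS = {
    "long": {"nodes": 3, "cores_per_node": 24, "ram_gb": 250},
    "short": {"nodes": 1, "cores_per_node": 20, "ram_gb": 126},
    "smallhm": {"nodes": 1, "cores_per_node": 16, "ram_gb": 256},
}


def partitions_fitting(cores, mem_gb):
    """Return the partitions whose nodes can satisfy a single-node request.

    Assumption: a request fits if it needs no more cores and no more RAM
    than one node of the partition offers.
    """
    return [
        name
        for name, spec in PARTITIONS.items()
        if cores <= spec["cores_per_node"] and mem_gb <= spec["ram_gb"]
    ]


# Example requests (hypothetical job sizes).
print(partitions_fitting(cores=4, mem_gb=100))
print(partitions_fitting(cores=20, mem_gb=200))
print(partitions_fitting(cores=16, mem_gb=256))
```

A request that returns an empty list, for example 24 cores with 256 GB of RAM, cannot run on a single node of any partition and would need to be reduced or split.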